Introduction

Large Language Models (LLMs) such as Chat-GPT are marvels of modern IT systems. But the truth is, nobody really understands how they are actually working under the hood. Questions abound: Why do they hallucinate? How can they respond accurately and reliably to queries based on proprietary data? How do we overcome limitations posed by the context window size when dealing with large input data?

Having some ideas how LLMs are working could help not only computer scientists and AI-engineers but every one of us trying to get things done in everyday business life with the help of our new AI assistants. This article aims to shed light on some key concepts at a high level, enabling more productive interactions with LLMs for users of any technical skill level. It particularly focuses on how LLMs operate in a “Latent Space” and how “Sparse Priming Representations (SPRs)” can be used to elicit better responses. SPRs significantly aid in crafting compact, fact-rich custom instructions, enhancing agent development, and bolstering knowledge retrieval systems. Additionally, I will share my GPT-Agent for the effortless creation of SPRs.

The Concept of Latent Space

The “Latent Space” concept provides insights into LLM workings. The term “latent” refers to the potential to find the right association within its “space”, a knowledge-rich, high-dimensional network of internal neural connections. These connections encode all the concepts and relations derived from vast training data. This Latent Space is not just a repository of knowledge but also encompasses latent abilities like reasoning and planning.

This mental image, a multidimensional and interconnected representation of world knowledge encoded in the Latent Space, helps us understand LLM responses better. For instance, short and generic requests trigger limited aspects in the LLM, resulting in basic responses. Conversely, “Role Prompting” (e.g., “Act like an XYZ expert”) effectively primes the network with relevant aspects, enhancing the richness of responses even to short queries.

Priming with Sparse Representation

The Latent Space concept also offers a way to optimize interactions with LLMs: systematic priming with fact-rich but compact input designed to trigger many related elements of the Latent Space is the key. Here, “Sparse Priming Representation (SPR)” comes into play, enabling such activation.

This process is akin to how cognitive cues in human thought help us reconstruct memories from sparse associations in our brains. For example, a specific smell can trigger long-forgotten childhood memories. Feeding SPRs into an LLM interaction is paralleling human memory systems such as episodic and semantic memory. It retrieves information through sparse, contextual recall, as essential elements trigger the reconstruction of missing data from that Latent Space and not only from the input data. This approach is particularly beneficial for AI-driven information management systems like in Retrieval-Augmented Generation (RAG).

Benefits and Applications of SPR

Employing SPRs in interactions with LLMs constructs minimal yet precise cues for efficient and accurate task execution across various language tasks. On average, SPRs achieve a compression ratio of 1 to 10 over raw input text while preserving important associations. Creating an SPR, a form of lossy compression, significantly reduces the token count processed by an LLM. Using SPRs in custom instructions yields far superior results as they activate richer responses from the Latent Space of potential answers.

Implementation SPR in LLMs

A crucial question remains: How do we construct SPRs from raw input? Of course we utilize an LLM to compress messy human input into clean, non-overlapping, unique, short, clear, and accurate SPRs. The best way to get the essence of the concept of a SPR is to explain it in the form of a SPR itself:

- Sparse Priming Representation (SPR): technique in advanced NLP, NLU, NLG

- Distills complex information into concise elements

- Elements: unique, non-overlapping statements, concepts, analogies

- Focus: clarity, conceptual accuracy

- Designed for language model processing, not human readability

- Aims for depth and nuance with minimal tokens

- Used to activate diverse aspects in LMM conversations

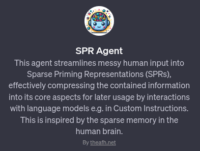

In order to save the reader the hassle of creating appropriate prompts, I have developed a chatbot with OpenAIs new GPT feature. Its name is SPR-Agent and it can process everything ChatGPT can process: including screenshots and text files, transforming them into a list of SPRs for versatile use:

In order to save the reader the hassle of creating appropriate prompts, I have developed a chatbot with OpenAIs new GPT feature. Its name is SPR-Agent and it can process everything ChatGPT can process: including screenshots and text files, transforming them into a list of SPRs for versatile use:

- Incorporate SPRs into custom instructions for enriched responses in generic chat interactions

- Save processing time in NLP tasks executed by LLMs

- Reduce token counts in paid API requests

- Use in RAG systems for faster and more cost-effective responses: invest processing overhead once and get faster and cheaper responses often

- Get faster model alignment when fine tuning with lot of messy data

Generally SPRs effectively maximize information within limited context windows. Because, let’s not kid ourselves, even if prompt widows get bigger over time, it’s like with computer RAM: you can never have enough as data is growing faster than processing capacity.

Conclusion

In summary, Sparse Priming Representations significantly enhance interactions with LLMs, symbolizing the ongoing evolution of our relationship with such models. For business leaders, tech innovators, and everyday users of LLMs, understanding and leveraging SPRs can be a transformative factor in fully harnessing the capabilities of language models in real-world applications.